Agentic AI Readiness Checklist 2026: Is Your Enterprise Really Ready?

Everyone Is Talking About AI Agents. Almost Nobody Is Ready for Them.

What Agentic AI Actually Is (And What It Is Not)

Before the checklist, let us be precise, because “agentic AI” is being applied to everything right now, and most of it is not actually agentic.

A true AI agent does three things that a standard AI assistant or chatbot cannot. It sets its own subgoals, takes autonomous actions across multiple steps and systems, and adapts its approach based on feedback, all without requiring a human prompt at every step. It does not just answer questions. It executes tasks.

As Microsoft’s AI Agents Hub describes it, agents range from simple prompt-and-response helpers to fully autonomous agents that execute entire workflows from start to finish. The platforms enabling this at enterprise scale today are Microsoft Copilot Studio for low-code agent building, Azure AI Foundry for enterprise-grade deployment, and Microsoft Agent 365, Microsoft’s control plane for observing, governing, and securing agents across your entire organization. Agent 365 has been generally available since May 1, 2026, at $15 per user per month.

Gartner warns that many vendors are engaged in “agent washing,” which means rebranding existing chatbots, RPA tools, and AI assistants as agents without delivering true agentic capabilities. Gartner estimates only about 130 of the thousands of companies claiming to offer agentic AI are building the real thing.

The first question for any enterprise leader is not “which agent platform should we choose?” It is “are we actually ready to deploy agents at all?”

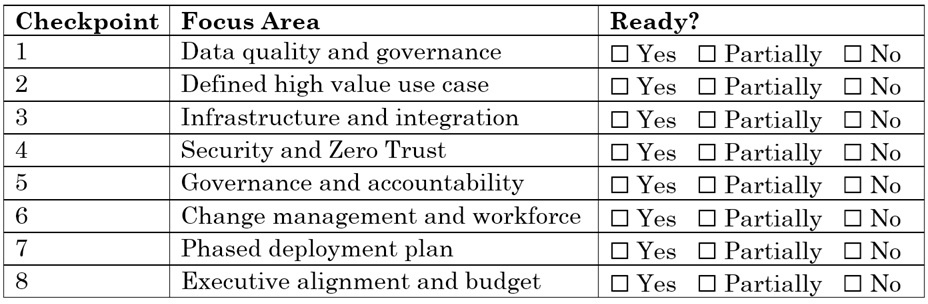

The Enterprise Agentic AI Readiness Checklist

Work through each of the eight dimensions below. Be honest. The gap between where you think you are and where you actually are is usually where agentic AI projects die.

Checkpoint 1: Data Quality and Governance

Why this comes first: AI agents are only as good as the data they act on. An agent that has access to inconsistent, stale, or poorly governed data will make confident-sounding decisions based on wrong information, at autonomous speed, across multiple systems, without a human catching each mistake.

Gartner’s 2026 Data and Analytics predictions are stark: by 2030, 50% of AI agent deployment failures will be due to insufficient governance platform enforcement. Most of those failures are seeded right now, in poorly governed data environments where agents are being deployed anyway.

What to check: Is your data consolidated and accessible, or siloed across disconnected systems? Do you have a data owner assigned to each major data domain agents will touch? Are your SharePoint, OneDrive, and cloud storage environments free of stale, duplicated, or overpermissioned content? Do you have a Microsoft Purview or equivalent data governance layer in place?

What readiness looks like: A data governance policy exists, sensitivity labels are applied, data owners are named, and there is a process for regular data quality audits. Agents should only operate on data you trust enough to act on autonomously.

Cloud 9 Note: A Free Data and AI Readiness Assessment from Cloud 9 includes a full review of your Microsoft 365 data governance posture, identifying oversharing risks, permission gaps, and data quality issues before agents surface them at scale.

Checkpoint 2: A Clearly Defined, High-Value Use Case

Why this comes second: Gartner’s research is direct: “Most agentic AI projects right now are early stage experiments or proof of concepts driven by hype and often misapplied.” The most common failure mode is not a technology failure. It is deploying an agent for a use case that did not need one.

Many use cases positioned as “agentic” today are actually better served by a simpler chatbot, an RPA workflow, or a Copilot prompt. Agentic AI delivers its highest ROI in tasks that are multistep, require reasoning across multiple systems or data sources, are high frequency, and carry meaningful business value when automated.

What to check: Can you name the specific workflow this agent will automate, not “improve productivity” but the precise sequence of steps? Is this workflow currently performed by humans at significant volume and cost? Does it require reasoning across multiple systems rather than just a single database lookup? Have you quantified what successful automation would be worth in hours saved, error reduction, or revenue impact?

What readiness looks like: A one-page use case brief exists with the workflow described step by step, the current human cost (hours per week multiplied by the fully loaded hourly labor cost), the expected agent throughput, and the success metric you will measure on day 30, day 60, and day 90.
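The cost line of the brief is simple arithmetic, but writing it down keeps the day 30, day 60, and day 90 reviews anchored to the same baseline. Below is a minimal Python sketch; it assumes a 48-week working year and a fully loaded hourly cost, and the function names and example figures are hypothetical placeholders, not benchmarks.

```python
def annual_human_cost(hours_per_week: float, loaded_hourly_cost: float,
                      working_weeks: int = 48) -> float:
    """Annual cost of the workflow as people perform it today.

    working_weeks = 48 is an assumption; adjust it for your leave policy.
    """
    return hours_per_week * loaded_hourly_cost * working_weeks


def annual_savings(hours_per_week: float, loaded_hourly_cost: float,
                   automation_share: float, working_weeks: int = 48) -> float:
    """Value of handing a share (0.0 to 1.0) of those hours to an agent."""
    if not 0.0 <= automation_share <= 1.0:
        raise ValueError("automation_share must be between 0.0 and 1.0")
    baseline = annual_human_cost(hours_per_week, loaded_hourly_cost, working_weeks)
    return baseline * automation_share


# Hypothetical workflow: 120 staff hours per week at a $65 loaded hourly cost,
# with the agent expected to absorb 60% of the effort.
cost = annual_human_cost(120, 65)
savings = annual_savings(120, 65, 0.6)
print(f"Current annual cost: ${cost:,.0f}")         # Current annual cost: $374,400
print(f"Estimated annual savings: ${savings:,.0f}")  # Estimated annual savings: $224,640
```

These are gross figures: the agent's own running costs (licensing, compute, and human oversight time) still have to come off the savings before the number belongs in an ROI claim.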

Checkpoint 3: Infrastructure and Integration Readiness

Why this matters: AI agents work by taking actions across systems: querying databases, calling APIs, reading documents, updating records, and sending communications. If those systems are not accessible via secure, well-documented APIs, or if your infrastructure cannot support the latency and uptime requirements of autonomous workflows, your agent will fail in production.

Microsoft’s Azure AI Foundry, used by more than 80,000 enterprises, including 80% of the Fortune 500, is built to connect agents to enterprise systems through more than 1,400 connections to platforms like SAP, Salesforce, and Dynamics 365, as well as custom Model Context Protocol (MCP) tools. But those connections only work if your systems are integration-ready.

What to check: Do the systems your agent will interact with have stable, documented REST APIs or MCP-compatible endpoints? Is your Azure environment configured with appropriate role-based access control for agent identities? Do you have network isolation and data residency controls in place for agent traffic? Is your Azure infrastructure scaled to handle agent workloads, including burst scenarios where many agents operate simultaneously?

What readiness looks like: Integration points are documented, APIs are tested, Azure environments are provisioned with agent-specific identity and networking controls, and there is a load-testing plan for production agent traffic.

Checkpoint 4: Security and Zero Trust Architecture

Why this is nonnegotiable: An AI agent that takes autonomous actions is also an autonomous attack surface. If an agent is compromised through prompt injection, model tampering, or misconfigured permissions, it can exfiltrate data, corrupt records, or execute harmful workflows at machine speed.

Microsoft’s Agent 365 is specifically designed to address this. It provides runtime threat protection, prompt injection detection, policy-based controls, and a unified control plane for discovering, governing, and securing agents across your entire organization, including third-party and local agents. Microsoft also introduced six dedicated Azure Copilot agents for cloud operations, each built with governance, compliance auditing, and role-based access control enforcement at their core.

What to check: Does each agent have its own dedicated Microsoft Entra identity with least-privilege access rather than a shared service account? Are sensitivity labels, DLP policies, and information barriers enforced for the data agents can access? Do you have a process for detecting and responding to prompt injection attacks against agents? Is Microsoft Defender or an equivalent solution configured to monitor agent activity for anomalous behavior? Do you have a human-in-the-loop gate for agent actions above a defined risk threshold?

What readiness looks like: Every agent has a dedicated Entra identity. Agent permissions are scoped to the minimum required access. Security monitoring is configured for agent traffic. High-risk agent actions require human approval before execution.
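The human-approval requirement can be expressed as a small gate in whatever orchestration layer runs your agents. The Python sketch below is illustrative only: `AgentAction`, the 0.0 to 1.0 risk score, and the 0.5 threshold are hypothetical, and none of it represents an Agent 365, Entra, or Copilot Studio API. How each action earns its risk score is a policy decision you still have to make.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class AgentAction:
    agent_id: str      # hypothetical: the agent's dedicated identity
    description: str
    risk_score: float  # hypothetical scale: 0.0 (harmless) to 1.0 (severe)


def execute_with_gate(action: AgentAction,
                      run: Callable[[AgentAction], str],
                      approve: Callable[[AgentAction], bool],
                      risk_threshold: float = 0.5) -> Optional[str]:
    """Execute low-risk actions directly; require human approval above the threshold.

    Returns the result of run(), or None if a human rejected the action.
    """
    if action.risk_score > risk_threshold and not approve(action):
        return None  # rejected actions are never executed
    return run(action)


def run(action: AgentAction) -> str:
    return f"executed: {action.description}"


def reviewer_rejects(action: AgentAction) -> bool:
    # Stand-in for a real approval step (ticket, chat message, etc.).
    return False


low = AgentAction("finance-agent-01", "Draft reconciliation summary", 0.2)
high = AgentAction("finance-agent-01", "Issue $50,000 vendor refund", 0.9)
print(execute_with_gate(low, run, reviewer_rejects))   # executed: Draft reconciliation summary
print(execute_with_gate(high, run, reviewer_rejects))  # None
```

Low-risk actions never reach the reviewer, so the gate only adds friction where the risk policy says it should; anything above the threshold stays blocked until a person explicitly approves it.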

Checkpoint 5: Governance Framework and Accountability

Why organizations skip this and why they regret it: Gartner’s 2026 CIO Agenda notes that 94% of CIOs expect major changes to their plans within 24 months, yet only 48% of digital initiatives actually meet or exceed business targets. The gap between intent and outcome is almost always a governance gap, and with agentic AI, the stakes are higher because agents act autonomously.

Who is accountable when an AI agent makes a wrong decision? Who owns the agent’s outputs? Who reviews agent logs? Who has the authority to shut an agent down? If you cannot answer these questions clearly today, you are not ready to deploy agents.

What to check: Is there a named AI agent owner for every agent being deployed, meaning a human accountable for its behavior and outputs? Does your organization have a written AI governance policy that covers autonomous agents? Is there a process for logging, auditing, and reviewing agent decisions, especially for regulated workflows? Do employees know how to report unexpected or harmful agent behavior? Have legal and compliance reviewed the use cases where agents will take actions?

What readiness looks like: An AI governance policy exists. Every deployed agent has a named owner. Audit logs are configured and reviewed on a regular cadence. Legal and compliance have signed off on agent use cases touching regulated data or decisions.
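Audit logs are only reviewable if every entry records which agent acted, who owns it, what it did, and who approved it. Here is a minimal sketch of one such entry written as a JSON line to an append-only file; the field names are a hypothetical schema, not the format of any Microsoft audit log.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def audit_entry(agent_id: str, owner: str, action: str, outcome: str,
                approved_by: Optional[str] = None) -> str:
    """Serialize one agent decision as a single JSON line (hypothetical schema)."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,
        "owner": owner,              # the named human accountable for this agent
        "action": action,
        "outcome": outcome,
        "approved_by": approved_by,  # None when no human approval was required
    }
    return json.dumps(entry, sort_keys=True)


def append_entry(path: str, line: str) -> None:
    """Open the log in append mode so existing entries are never overwritten."""
    with open(path, "a", encoding="utf-8") as log:
        log.write(line + "\n")


line = audit_entry("claims-agent-02", "jane.doe@example.com",
                   "Flagged claim for manual review", "success")
print(line)
```

Keeping the owner on every entry means a reviewer never has to look elsewhere to find the accountable person for a decision.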

Checkpoint 6: Change Management and Workforce Readiness

Why technology is the easy part: The hardest part of deploying AI agents is not building them. It is getting your workforce to trust them, use them correctly, and not work around them. Employees who do not understand what an agent is doing, or who fear it is replacing their role, will actively avoid or undermine it.

This is not a hypothetical. Gartner research recommends that to get real value from agentic AI, organizations must focus on enterprise productivity rather than just individual task augmentation. That requires workforce buy-in. Microsoft’s own partner guidance frames successful agent deployment as a three-part model: technical readiness, delivery capability with governance and compliance, and organizational readiness for operating at scale.

What to check: Have the employees whose workflows agents will touch been involved in defining the use case? Is there a clear communication plan explaining what the agent does, what it does not do, and how human oversight works? Have you identified internal AI champions who will advocate for adoption in each team? Is there a feedback mechanism for employees to flag agent errors or unexpected behaviors?

What readiness looks like: Affected employees participated in use case definition. A communication and training plan is written and scheduled. Internal champions are identified. A feedback loop is built into the deployment plan from day one.

Checkpoint 7: A Phased Deployment Plan, Not a Big Bang

Why starting small is the only way to start right: The organizations that successfully scale agentic AI are the ones that earned the right to scale. They ran narrow, measurable pilots first, demonstrated value in a controlled environment, and built organizational confidence before expanding.

Gartner explicitly recommends that in this early stage, agentic AI should only be pursued where it delivers clear value or ROI, and that rethinking workflows from the ground up rather than bolting agents onto existing broken processes is the ideal implementation path. Microsoft Foundry is built to support exactly this: an agent development lifecycle with testing, evaluation, tracing, and staged deployment built into the platform before any agent goes to production.

What to check: Is your first agent project scoped narrowly, covering a single workflow, a single team, and a defined success metric? Do you have an evaluation pipeline in place to measure agent quality before expanding access? Is there a human override mechanism available at every stage of the pilot? Is there a clear definition of what “success” means at the 30, 60, and 90 day marks? Do you have an agreed escalation plan if the agent produces harmful or incorrect outputs at scale?

What readiness looks like: A Phase 1 pilot is scoped to one workflow and one team. Success metrics are defined and measurable. An evaluation framework is in place. An escalation and rollback plan is documented before go-live.
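An evaluation gate does not have to be elaborate to be useful in a Phase 1 pilot. The sketch below scores an agent against a fixed set of test cases and only allows wider access when the pass rate clears a threshold. It is a simplified illustration: exact string matching stands in for the graded or model-assisted scoring a real evaluation framework would use, and `toy_agent` and the 0.95 threshold are hypothetical.

```python
from typing import Callable, Sequence, Tuple

EvalCase = Tuple[str, str]  # (input prompt, expected output)


def pass_rate(agent: Callable[[str], str], cases: Sequence[EvalCase]) -> float:
    """Fraction of cases where the agent's output exactly matches the expectation."""
    if not cases:
        raise ValueError("need at least one test case")
    passed = sum(1 for prompt, expected in cases if agent(prompt).strip() == expected)
    return passed / len(cases)


def ready_to_expand(rate: float, threshold: float = 0.95) -> bool:
    """Gate for widening access beyond the pilot team."""
    return rate >= threshold


def toy_agent(prompt: str) -> str:
    # Hypothetical stand-in for a real agent call.
    answers = {"Status of PO-1001?": "approved", "Status of PO-1002?": "pending"}
    return answers.get(prompt, "unknown")


cases = [
    ("Status of PO-1001?", "approved"),
    ("Status of PO-1002?", "pending"),
    ("Status of PO-1003?", "rejected"),
]
rate = pass_rate(toy_agent, cases)
print(f"pass rate {rate:.2f}, expand: {ready_to_expand(rate)}")  # pass rate 0.67, expand: False
```

Running the same fixed case set at day 30, day 60, and day 90 gives a like-for-like trend line, which is what the expansion decision should rest on.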

Checkpoint 8: Executive Alignment and Budget Commitment

Why this is the last checkpoint and the most important: Every readiness dimension above requires sustained investment in data governance, security tooling, change management, and phased deployment. Without executive alignment and a realistic multi-quarter budget, even well-designed agentic AI programs stall.

Gartner’s CIO Agenda for 2026 is clear: CIOs who relentlessly pursue financial outcomes from technology initiatives, especially AI, are 25% more likely to be top performers, yet only 33% consistently do so. The organizations winning with agentic AI are treating it as a strategic business investment with board-level visibility, not an IT experiment with a one-quarter budget.

What to check: Has the CEO or CFO been briefed on the agentic AI strategy and committed to a multi-year investment horizon? Is there a dedicated AI budget rather than a line item borrowed from another project? Is the CIO or a C-suite AI sponsor accountable for agentic AI outcomes in the business plan? Is there a plan for scaling successful pilots into production within a defined timeframe and budget?

What readiness looks like: Board-level visibility exists for the agentic AI strategy. A dedicated multi-year budget is approved. A named C-suite sponsor owns outcomes. Success metrics are tied to business outcomes, not technology milestones.

Your Readiness Score at a Glance

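Score yourself by counting one Yes for each of the eight checkpoints where your organization already meets the “What readiness looks like” criteria: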

7 to 8 Yes: You have the foundation. Start your pilot now with a governed, narrow use case.

4 to 6 Yes: You are closer than most. Address the No items before scaling, not after.

0 to 3 Yes: Build the foundation first. A rushed agentic AI deployment will cost more to fix than to build right.
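If you track the checklist in a spreadsheet or script, the scoring rule reduces to counting Yes answers across the eight checkpoints. A minimal Python sketch; the checkpoint keys are shortened labels chosen for illustration, and the bands mirror the three ranges above.

```python
from typing import Dict

CHECKPOINTS = [
    "data_governance",
    "use_case",
    "integration",
    "security",
    "governance_accountability",
    "change_management",
    "phased_deployment",
    "executive_alignment",
]


def readiness_band(answers: Dict[str, bool]) -> str:
    """Count one point per checkpoint answered Yes and map the total to a band."""
    missing = [c for c in CHECKPOINTS if c not in answers]
    if missing:
        raise ValueError(f"unanswered checkpoints: {missing}")
    score = sum(1 for c in CHECKPOINTS if answers[c])
    if score >= 7:
        band = "start your pilot now with a governed, narrow use case"
    elif score >= 4:
        band = "address the No items before scaling"
    else:
        band = "build the foundation first"
    return f"{score}/8: {band}"


answers = {c: True for c in CHECKPOINTS}
answers["security"] = False
answers["executive_alignment"] = False
print(readiness_band(answers))  # 6/8: address the No items before scaling
```

Treating an unanswered checkpoint as an error, rather than silently counting it as No, forces the honest conversation the checklist is asking for.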

What Happens When Organizations Skip the Checklist

Gartner puts it plainly: the most common failure is that organizations cannot tell the difference between genuine agentic AI and “agent washing.” They invest in pilots that produce impressive demos but never reach production, because the data is not clean enough, the governance is not in place, the integrations do not hold, or the workforce was never brought along.

The cost is not just the budget line. It is organizational trust in AI as a category, which then makes the next, better scoped attempt harder to fund and harder to adopt.

The organizations that are succeeding with agentic AI in 2026 are the ones that answered “yes” to most of this checklist before they started, not after their first failure.

Want to know exactly where your organization stands? Cloud 9 Infosystems offers a Free AI and Cloud Readiness Assessment that maps your environment against every dimension of this checklist, with specific, prioritized recommendations from our Microsoft-certified team. No obligation, no sales pitch. Just clarity on where you are and what to fix first.

Where to Start on the Microsoft Stack: A Quick Platform Map

If you are a Microsoft 365 enterprise and have cleared most of this checklist, here is how the Microsoft agentic AI stack maps to your deployment journey.

For building agents without code: Microsoft Copilot Studio lets you build and deploy agents connected to your Microsoft 365 data and Power Platform workflows without writing a single line of code.

For building agents at enterprise scale: Azure AI Foundry is a fully managed platform for building, deploying, and scaling agents with enterprise security, identity, observability, and compliance built in from the start.

For governing and securing every agent: Microsoft Agent 365 is the control plane for managing every AI agent in your organization, including agents built on Copilot Studio, Azure AI Foundry, and third-party platforms. It provides discovery, inventory, governance policies, runtime threat protection, and audit logging across your entire agent ecosystem. Generally available since May 1, 2026, at $15 per user per month.

For organizations ready for the full Frontier stack: Microsoft 365 E7, announced in 2026, brings together Microsoft 365 Copilot, Agent 365, Microsoft Entra Suite, and advanced Defender, Intune, and Purview capabilities into a single enterprise package at $99 per user per month.

Cloud 9 Infosystems is a Microsoft Solutions Partner with 16-plus years of experience helping enterprises deploy, govern, and optimize the full Microsoft stack, including agentic AI solutions built on Copilot Studio and Azure AI Foundry.

The Bottom Line for Enterprise Leaders

Agentic AI is not hype. Gartner projects that 40% of enterprise applications will include task-specific AI agents by the end of 2026, and that by 2028 at least 15% of day-to-day work decisions will be made autonomously by AI agents. The enterprises that build the right foundation today will be the ones that capture that value. The ones that rush will end up among the more than 40% of agentic AI projects that Gartner predicts will be canceled by the end of 2027.

Use this checklist. Be honest with your score. And if you have gaps, fix them systematically, not in parallel with a rushed deployment.

Cloud 9 Infosystems helps US enterprises assess their agentic AI readiness, build the governance and data foundation, and deploy production-ready agents on the Microsoft platform, from Copilot Studio pilots to full Azure AI Foundry deployments. We have done this for over 16 years across healthcare, financial services, government, and enterprise IT.

→ Schedule Your Free AI Readiness Assessment

Frequently Asked Questions

What exactly is the difference between an AI assistant like Copilot and a true AI agent?

A Copilot or AI assistant responds to your prompt and generates an output, but you still take the action. A true AI agent, as defined by Microsoft’s AI Agents platform, can set subgoals, call tools, access data, make decisions across multiple steps, and execute actions autonomously without requiring a human prompt at every step. The difference is agency: an assistant advises, an agent acts.

Why are so many agentic AI projects failing right now?

Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, and inadequate risk controls. The core issue is that most organizations are deploying agents before they have addressed data governance, security architecture, and workforce readiness. The technology is not the bottleneck. The foundation is.

What is Microsoft Agent 365 and do we need it?

Microsoft Agent 365, generally available since May 1, 2026, is Microsoft’s control plane for managing every AI agent in your organization, including agents built on Copilot Studio, Azure AI Foundry, and third-party platforms. It provides discovery, inventory, governance policies, runtime threat protection, and audit logging across your entire agent ecosystem. If your organization is deploying more than one or two agents, Agent 365 is not optional. It is what prevents agent sprawl from becoming a security liability.

What is the difference between Microsoft Copilot Studio and Azure AI Foundry for building agents?

Copilot Studio is a low-code platform ideal for business teams building agents connected to Microsoft 365 data and Power Platform workflows; no developer is required for many scenarios. Azure AI Foundry is the enterprise-grade, pro-code platform for building complex, multistep agents that require custom integration, advanced security controls, multiagent orchestration, and enterprise observability. Most organizations end up using both: Copilot Studio for the 80% of high-frequency, lower-complexity interactions, and Foundry for the complex reasoning workflows that sit behind them.

How long does it typically take to deploy a production ready AI agent?

Timelines vary by complexity, but the pattern for successful deployments is: 2 to 4 weeks for data and governance preparation, 4 to 6 weeks for pilot build and testing in a controlled environment, 2 to 4 weeks for evaluation and feedback integration, and 2 to 4 weeks for staged rollout to the full target user group. Total: 10 to 18 weeks from kickoff to production for a well-scoped first agent. Organizations that try to compress this timeline, especially by skipping the governance and evaluation phases, are the ones most likely to land among the more than 40% of agentic AI projects Gartner expects to be canceled.
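The 10 to 18 week total is simply the sum of the four phase ranges, assuming the phases run back to back with no overlap. A quick Python check, with phase labels paraphrased from the answer above:

```python
# Each tuple is (phase, minimum weeks, maximum weeks).
PHASES = [
    ("Data and governance preparation", 2, 4),
    ("Pilot build and testing", 4, 6),
    ("Evaluation and feedback integration", 2, 4),
    ("Staged rollout", 2, 4),
]

min_weeks = sum(low for _, low, _ in PHASES)
max_weeks = sum(high for _, _, high in PHASES)
print(f"Total: {min_weeks} to {max_weeks} weeks")  # Total: 10 to 18 weeks
```

If your plan overlaps phases, for example starting evaluation while parts of the pilot are still being built, the calendar total shrinks even though the work in each phase does not.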

What industries are seeing the fastest ROI from agentic AI today?

Based on Microsoft deployment patterns and Gartner sector research, the highest early ROI is appearing in IT operations through agent-driven incident detection and resolution, customer service through autonomous tier-1 resolution, finance through automated reconciliation and compliance checks, supply chain through real-time inventory and logistics decision support, and healthcare operations through administrative workflow automation. Cloud 9 has deployed agentic solutions for clients in healthcare, financial services, and professional services.

What is “agent washing” and how do we avoid it?

Gartner warns that many vendors are rebranding existing chatbots, RPA tools, and AI assistants as “agents” without delivering true autonomous, multistep reasoning capabilities. Gartner estimates only about 130 of the thousands of vendors claiming agentic AI are building the real thing. To avoid it, ask vendors to demonstrate a live agent completing a multistep task across two or more real systems. If the agent only responds to prompts without taking autonomous actions, it is not agentic.
